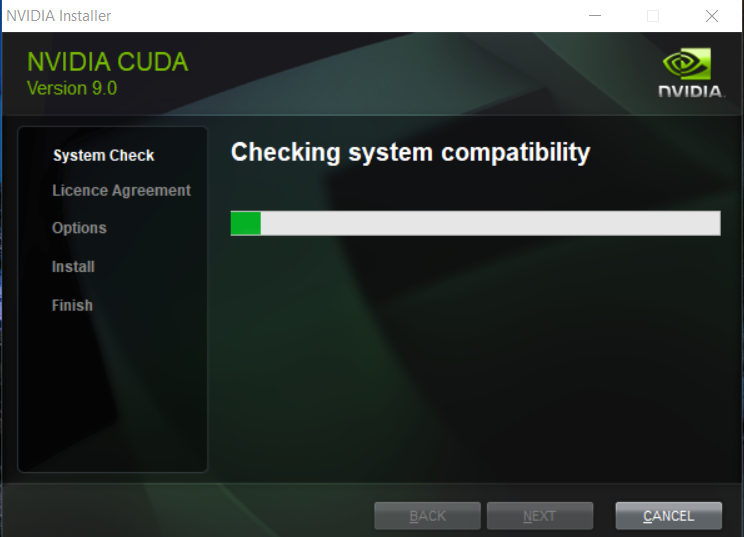

NVIDIA CUDA Toolkit is a powerful development package for developers, testers, scientists, and researchers who aim to create flexible, fast, and scalable applications.

Getting started with CUDA and the content of this extensive package

First, you can get the CUDA Toolkit from NVIDIA either as a single large executable or through an installer that lets you customize the installation by choosing which tools to add to your new development environment.

Note: The developer driver packages below provide baseline support for the widest number of NVIDIA products in the smallest number of installers. More recent production driver packages for developers and end users may be available.

The downloads listed include:

- Developer Drivers for WinVista and Win7 (263.06)
- Notebook Developer Drivers for WinVista and Win7 (260.99)
- Notebook Developer Drivers for WinXP (260.99)
- CUDA Toolkit Build Rules Patch for Windows
- CUDA Developer Guide for Optimus Platforms
- CUDA Toolkit for RedHat Enterprise Linux 4.
- CUDA Toolkit for RedHat Enterprise Linux 5.5
- NVIDIA Performance Primitives (NPP) library

For additional tools and solutions for Windows, Linux, and Mac OS, such as CUDA Fortran, CULA, and CUDA-GDB, see NVIDIA's Tools and Ecosystem page.

In CUDA Toolkit 3.2 and the accompanying release of the CUDA driver, some important changes have been made to the CUDA Driver API to support large memory access for device code and to enable further system calls such as malloc and free. Please refer to the CUDA Toolkit 3.2 Readiness Tech Brief for a summary of these changes.

Windows developers should be sure to check out the new debugging and profiling features in Parallel Nsight v1.5 for Visual Studio. Please refer to the Release Notes and Getting Started Guides for more information.
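As a rough illustration of the in-kernel malloc and free support mentioned above, here is a minimal sketch in which each thread allocates a small scratch buffer on the device heap, uses it, and frees it. The kernel name `scratchSum`, the helper `expectedSum`, and the sizes are made up for this example. It uses current CUDA runtime calls rather than anything specific to the 3.2 release, and it assumes a GPU of compute capability 2.0 (Fermi) or later.

```cuda
#include <cstdio>
#include <cstdlib>
#include <cuda_runtime.h>

// Closed-form value each thread should produce: sum of (tid + i) for i in [0, n).
// Hypothetical helper, used only to check the kernel's results on the host.
int expectedSum(int tid, int n)
{
    return n * tid + n * (n - 1) / 2;
}

// Each thread allocates a scratch buffer from the device heap with malloc(),
// fills and sums it, then releases it with free().
__global__ void scratchSum(int *out, int n)
{
    int tid = blockIdx.x * blockDim.x + threadIdx.x;

    int *scratch = static_cast<int *>(malloc(n * sizeof(int)));
    if (scratch == NULL) {   // the device heap can run out, so check the result
        out[tid] = -1;
        return;
    }

    int sum = 0;
    for (int i = 0; i < n; ++i) {
        scratch[i] = tid + i;
        sum += scratch[i];
    }
    out[tid] = sum;

    free(scratch);
}

int main()
{
    const int threads = 32;  // illustrative sizes, not taken from the toolkit
    const int n = 8;
    int h_out[threads];
    int *d_out = NULL;

    if (cudaMalloc((void **)&d_out, threads * sizeof(int)) != cudaSuccess) {
        fprintf(stderr, "cudaMalloc failed\n");
        return EXIT_FAILURE;
    }

    scratchSum<<<1, threads>>>(d_out, n);

    // cudaMemcpy waits for the kernel to finish before copying the results back.
    cudaError_t err = cudaMemcpy(h_out, d_out, sizeof(h_out), cudaMemcpyDeviceToHost);
    cudaFree(d_out);
    if (err != cudaSuccess) {
        fprintf(stderr, "CUDA error: %s\n", cudaGetErrorString(err));
        return EXIT_FAILURE;
    }

    for (int t = 0; t < threads; ++t) {
        if (h_out[t] != expectedSum(t, n)) {
            fprintf(stderr, "mismatch at thread %d\n", t);
            return EXIT_FAILURE;
        }
    }
    printf("all %d threads produced the expected sums\n", threads);
    return EXIT_SUCCESS;
}
```

Checking the pointer returned by the device-side malloc matters in practice, because the device heap is a fixed-size pool and per-thread allocations can exhaust it quickly.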
- SimpleSurfaceWrite, demonstrating how CUDA kernels can write to 2D surfaces on Fermi GPUs
- Vflocking Direct3D/CUDA, which simulates and visualizes the flocking behavior of birds in flight
- SLI with Direct3D Texture, a simple example demonstrating the use of SLI and Direct3D interoperability with CUDA C
- cudaEncode, showing how to use the NVIDIA H.264 Encoding Library using YUV frames as input

- Simple Printf, demonstrating best practices for using both printf and cuprintf in compute kernels
- Bilateral Filter, an edge-preserving non-linear smoothing filter for image recovery and denoising implemented in CUDA C with OpenGL rendering
- Interval Computing, demonstrating the use of interval arithmetic operators using C++ templates and recursion
- Several code samples demonstrating how to use the new CURAND library, including MonteCarloCURAND, EstimatePiInlineP, EstimatePiInlineQ, EstimatePiP, EstimatePiQ, SingleAsianOptionP, and randomFog
- Conjugate Gradient Solver, demonstrating the use of CUBLAS and CUSPARSE in the same application
- Function Pointers, a sample that shows how to use function pointers to implement the Sobel Edge Detection filter for 8-bit monochrome images
- New NVIDIA System Management Interface (nvidia-smi) support for reporting % GPU busy, and several GPU performance counters
- Support for memory management using malloc() and free() in CUDA C compute kernels
- Support for debugging GPUs with more than 4GB device memory
- NVCC support for Intel C Compiler (ICC) v11.1 on 64-bit Linux distros
- Multi-GPU debugging support for both cuda-gdb and Parallel Nsight
- Expanded cuda-memcheck support for all Fermi architecture GPUs

- New support for enabling high performance Tesla Compute Cluster (TCC) mode on Tesla GPUs in Windows desktop workstations
- Support for new 6GB Quadro and Tesla products
- H.264 encode/decode libraries now included in the CUDA Toolkit
- New CURAND library of GPU-accelerated random number generation (RNG) routines, supporting Sobol quasi-random and XORWOW pseudo-random routines at 10x to 20x faster than similar routines in MKL

Release Highlights

New and Improved CUDA Libraries

- CUBLAS performance improved 50% to 300% on Fermi architecture GPUs, for matrix multiplication of all datatypes and transpose variations
- CUFFT performance tuned for radix-3, -5, and -7 transform sizes on Fermi architecture GPUs, now 2x to 10x faster than MKL
- New CUSPARSE library of GPU-accelerated sparse matrix routines for sparse/sparse and dense/sparse operations delivers 5x to 30x faster performance than MKL

Individual code samples from the SDK are also available.
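To give a feel for the CURAND library listed in the highlights, the following is a small sketch of a Monte Carlo estimate of pi, loosely in the spirit of the EstimatePi samples but not taken from them. It uses the CURAND host API with the XORWOW generator. The kernel `countInside`, the helper `piFromHits`, the seed, and the sample count are all assumptions made for this example. It would typically be built with something like `nvcc estimate_pi.cu -lcurand`, where the file name is hypothetical.

```cuda
#include <cstdio>
#include <cstdlib>
#include <cuda_runtime.h>
#include <curand.h>

// Converts a hit count into a pi estimate: the quarter circle covers
// pi/4 of the unit square, so pi is about 4 * hits / samples.
float piFromHits(unsigned int hits, unsigned int samples)
{
    return 4.0f * static_cast<float>(hits) / static_cast<float>(samples);
}

// Counts how many (x, y) pairs fall inside the unit quarter circle.
// Points are stored interleaved: xy[2*i] is x, xy[2*i + 1] is y.
__global__ void countInside(const float *xy, unsigned int samples, unsigned int *hits)
{
    unsigned int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < samples) {
        float x = xy[2 * i];
        float y = xy[2 * i + 1];
        if (x * x + y * y <= 1.0f) {
            atomicAdd(hits, 1u);
        }
    }
}

int main()
{
    const unsigned int samples = 1u << 20;  // illustrative sample count
    float *d_xy = NULL;
    unsigned int *d_hits = NULL;
    unsigned int h_hits = 0;

    if (cudaMalloc((void **)&d_xy, 2 * samples * sizeof(float)) != cudaSuccess ||
        cudaMalloc((void **)&d_hits, sizeof(unsigned int)) != cudaSuccess) {
        fprintf(stderr, "cudaMalloc failed\n");
        return EXIT_FAILURE;
    }
    cudaMemset(d_hits, 0, sizeof(unsigned int));

    // Fill the device buffer with uniform random floats using XORWOW.
    curandGenerator_t gen;
    if (curandCreateGenerator(&gen, CURAND_RNG_PSEUDO_XORWOW) != CURAND_STATUS_SUCCESS) {
        fprintf(stderr, "curandCreateGenerator failed\n");
        return EXIT_FAILURE;
    }
    curandSetPseudoRandomGeneratorSeed(gen, 1234ULL);  // arbitrary seed
    if (curandGenerateUniform(gen, d_xy, 2 * samples) != CURAND_STATUS_SUCCESS) {
        fprintf(stderr, "curandGenerateUniform failed\n");
        return EXIT_FAILURE;
    }

    const unsigned int block = 256;
    const unsigned int grid = (samples + block - 1) / block;
    countInside<<<grid, block>>>(d_xy, samples, d_hits);

    // cudaMemcpy waits for the kernel to finish before copying the count back.
    cudaError_t err = cudaMemcpy(&h_hits, d_hits, sizeof(h_hits), cudaMemcpyDeviceToHost);

    curandDestroyGenerator(gen);
    cudaFree(d_xy);
    cudaFree(d_hits);

    if (err != cudaSuccess) {
        fprintf(stderr, "CUDA error: %s\n", cudaGetErrorString(err));
        return EXIT_FAILURE;
    }

    printf("pi is roughly %f\n", piFromHits(h_hits, samples));
    return EXIT_SUCCESS;
}
```

A single atomic counter keeps the sketch short. A per-block reduction would likely scale better for larger sample counts.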
